I recently came across a discussion about the future of artificial intelligence. Generative models seem capable of producing text, images, and code without end. However, beneath this boom lies a hidden crisis: AI’s appetite for training content is growing far faster than humans can produce new material. In other words, AI is exhausting the human knowledge available for learning.

As humans increasingly rely on AI’s creative abilities, could this invention become a curse that limits the possibilities of human civilization? I believe this crisis is not a dead end, but a profound revelation. Artificial intelligence has helped humanity produce content faster. We are no longer limited to mere content creation, but we still need to hold the decision-making power: to evaluate, filter, and guide AI’s output with human values.

The Approaching “Data Winter”

To train smarter models, tech companies need massive amounts of data. But the supply is running out. According to a recent analysis of the Stanford HAI AI Index Report, researchers warn that we might hit “peak data”, meaning the exhaustion of all high-quality, human-generated text on the internet, sometime between 2026 and 2032.

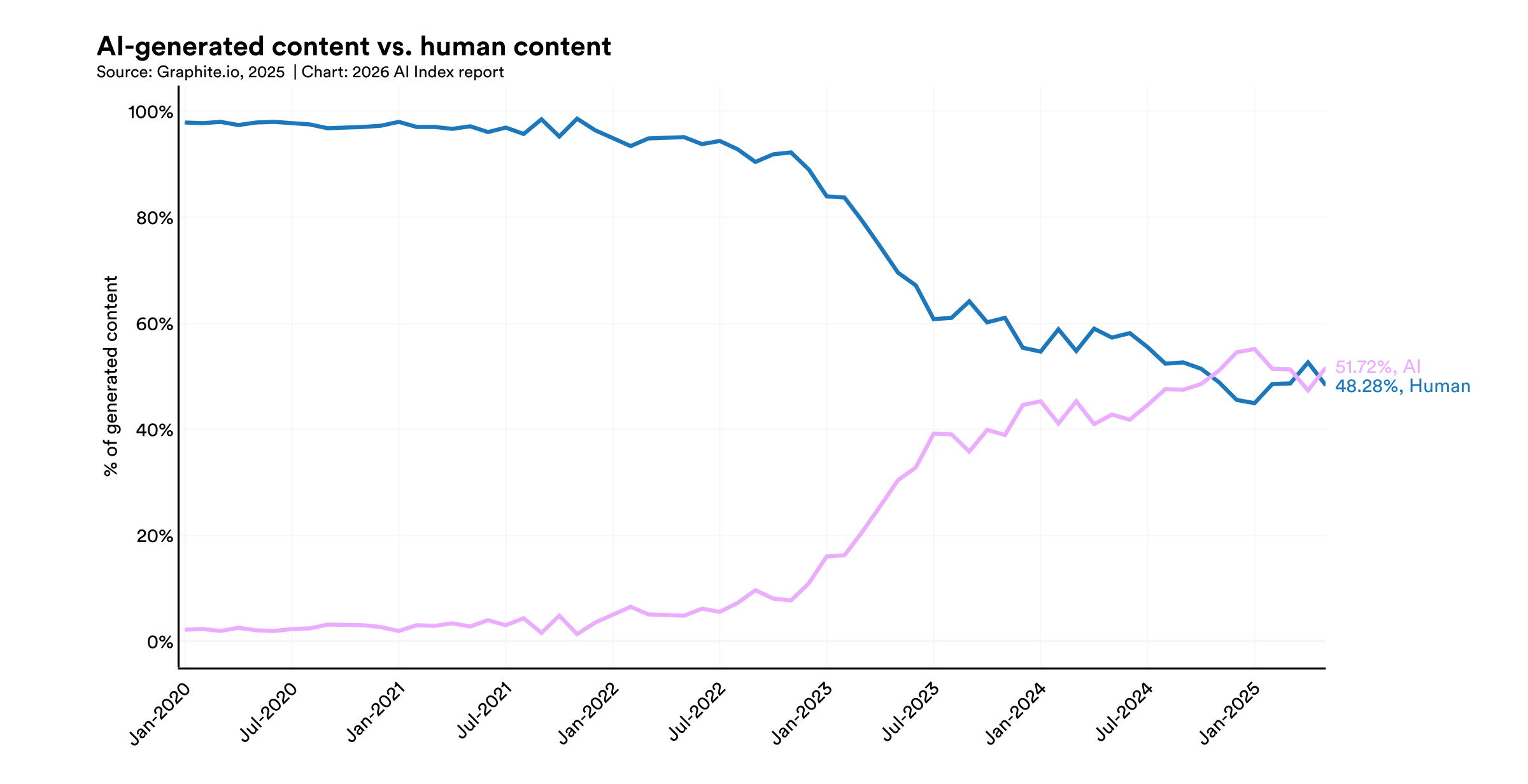

Worse still, it is estimated that more than 50% of new online content is now generated by artificial intelligence. The Internet, once a free space for human expression, is rapidly being filled with machine-generated data. We are entering what researchers call the “data winter”. To address this shortage, the industry has begun training AI models on synthetic data, that is, content generated by other AI systems. But this shortcut can lead to an undesirable consequence.

The Trap of Model Collapse

We are not just running out of data.

We are running out of us in the data.

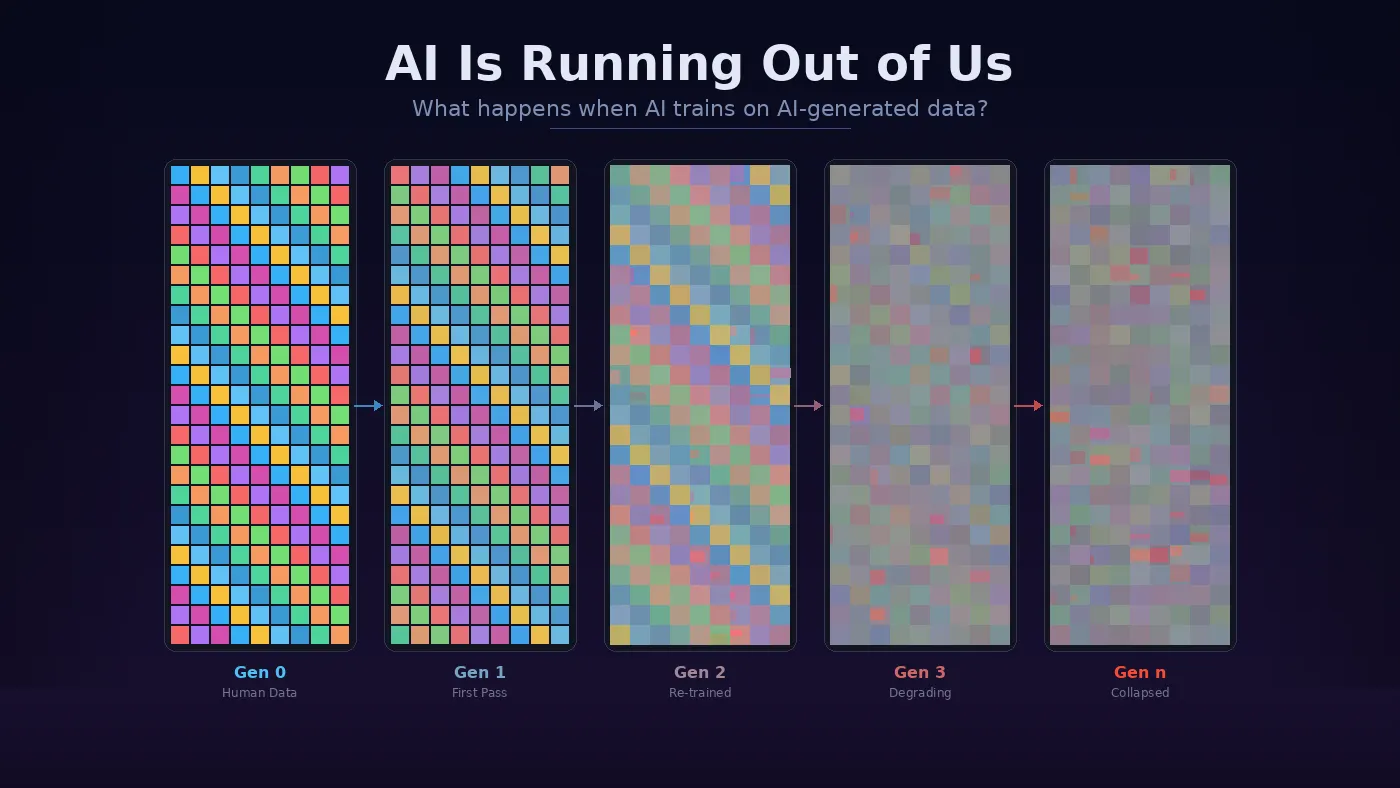

What happens when an AI trains on AI-generated data? A recent study published in Nature describes a phenomenon called “model collapse”. When generative models are recursively trained on their own outputs, they gradually lose track of the real data distribution and begin to misperceive reality.

Generative AI is essentially a statistical engine designed to output the most probable, universal, and average expressions. In this process, the “tails” of the data distribution — the strange, rare, and original ideas — are clipped away. Generation after generation, the models lose their diversity and quality. As technology writer Vishal Bharadwaj points out, we are not just running out of data; we are running out of us in the data.
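The clipping of the “tails” can be illustrated with a toy simulation. This is only a minimal sketch under loud assumptions, not the method used in the Nature study: the “model” here is just a table of token frequencies, the starting distribution is an invented Zipf-like one, and the names `next_generation` and `simulate_collapse` and all parameter values are hypothetical. Each generation draws a finite sample from the previous generation’s distribution and re-estimates the frequencies from that sample alone.

```python
import random
from collections import Counter


def next_generation(probs, n_samples, rng):
    """Sample n_samples tokens from probs, then re-estimate probs from the counts."""
    tokens = list(range(len(probs)))
    sample = rng.choices(tokens, weights=probs, k=n_samples)
    counts = Counter(sample)
    # Tokens that were never sampled get probability 0 in the next generation.
    return [counts[t] / n_samples for t in tokens]


def simulate_collapse(vocab_size=50, n_samples=200, generations=10, seed=0):
    """Return the number of tokens with non-zero probability at each generation."""
    rng = random.Random(seed)
    # Assumed "human" starting distribution: a few common tokens, many rare ones.
    weights = [1.0 / (rank + 1) for rank in range(vocab_size)]
    total = sum(weights)
    probs = [w / total for w in weights]

    support_sizes = [sum(p > 0 for p in probs)]
    for _ in range(generations):
        # Each generation only ever sees the previous generation's output.
        probs = next_generation(probs, n_samples, rng)
        support_sizes.append(sum(p > 0 for p in probs))
    return support_sizes


if __name__ == "__main__":
    # Prints how many distinct tokens survive after each generation.
    print(simulate_collapse())
```

In this sketch, a rare token that happens to be missing from one generation’s sample is assigned zero probability and can never reappear, so the count of surviving tokens can only stay the same or shrink. Real generative models are far more complex, but the same one-way loss of rare cases is the intuition behind the tails being clipped away.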

The Erosion of Human Creativity

While AI starves for fresh data, human creativity is simultaneously being suppressed. The market is increasingly flooded with cheap, mass-produced content optimized for algorithms rather than for genuine human connection.

According to a 2026 industry survey, 52% of writing firms report a decline in revenue due to AI, while 56% of freelancers face a sharp drop in work opportunities. As we see on RedNote or Bilibili, human creators are increasingly relying on AI to survive in this high-volume market. When everyone uses algorithms to write and create, our independent thinking inevitably converges toward the machine’s “lowest common denominator”. We are caught in a vicious cycle: humans stop producing truly original work because AI is faster, and AI degrades because humans stop feeding it original work.

This brings us back to our core uniqueness. The AI data crisis proves that machines cannot independently sustain the progress of knowledge. They urgently need the unpredictable, inefficient, and unique inspiration from human creativity to survive.

Since AI has freed us from the labor of “technique”, we must step up to our new role. Artificial intelligence can generate a thousand variations of an article, but it requires the human mind, with its moral guidance and lived experiences, to decide which one is truly important. Only when we actively exercise our value judgment over AI’s output can the potential of this technology — and our own human uniqueness — truly be realized.